ChatGPT Involved in South Korean Murder Case

In less than a month, a South Korean woman poisoned two men with drug-laced drinks at motels in Seoul. Before committing the crimes, she repeatedly asked ChatGPT questions such as:

- What happens when you take sleeping pills with alcohol?

- How much is dangerous?

- Can it kill someone?

From the AI’s responses, she learned that combining alcohol with the drug could be fatal. She then mixed a benzodiazepine sedative into the victims’ alcoholic drinks, resulting in their deaths.

Benzodiazepines are strictly regulated in South Korea, and ordinary people cannot easily buy them at pharmacies. Before carrying out her plan, she obtained a benzodiazepine prescription by claiming to have a mental illness.

The suspect, identified as Kim, appeared in court on February 12 for a review of her arrest warrant over the alleged killing of two men at motels in the Gangbuk district of Seoul.

In this tragedy, now known as the “Gangbuk Motel Serial Death Case,” AI played an inappropriate role as a “consultant,” exposing the risks of AI systems that lack adequate safety measures.

ChatGPT as a Co-Conspirator in Two Murders

On January 28, around 9:30 PM, Kim accompanied a man in his twenties into a motel room in the Suyeong-dong area of Gangbuk. Just two hours later, she was seen leaving the motel alone. The next day, the man was found dead in the motel room.

This was not an accident. Less than two weeks later, on February 9, Kim checked into another motel with another young man, who also died from the same “deadly mixture.”

Kim’s method was covert: she served the victims alcoholic drinks laced with a benzodiazepine sedative, a prescription drug that can become a deadly poison when combined with alcohol.

This was not her first crime. As early as mid-December last year, she had given a man (reportedly her boyfriend at the time) a similar drink in a café parking lot in Namyangju, Gyeonggi Province. He fell into a coma after drinking it and regained consciousness only two days later, after which he reported the incident.

On February 11, Kim was arrested by police. She flatly denied the allegations and tried to pass everything off as an accident: “We had an argument at the motel; I just wanted him to sleep, so I handed him the drink. I only learned he was dead when the police contacted me.”

Initially, she faced only the lesser charge of “causing death by injury.” The next day, however, her lies were exposed. On February 12, the Seoul Northern District Court’s review and the data police recovered from her phone brought a stunning reversal to the case.

Police found clear signs that she had increased the drug dosage after the first crime, and they unearthed a long string of conversations with ChatGPT on her phone. She had repeatedly asked about drug interactions, from “What happens when you mix sleeping pills with alcohol?” to “Can it kill someone?”

According to South Korean media, these chat records became irrefutable evidence of her intent to kill: Kim was fully aware that mixing alcohol and sleeping pills could be fatal. To further assess her mental state, police arranged for a criminal psychologist to conduct a psychopathy assessment and an in-depth interview with her.

Ultimately, on February 19, Kim was charged with murder and with violations of the Narcotics Control Act, and her case was sent, with her in detention, to the Seoul Northern District Prosecutors’ Office.

In response to the charges, Kim claimed, “I just wanted them to sleep.” However, police found that she had prepared a “poison drink” containing multiple drugs at home before committing the crimes.

This case highlights not only the evil of human nature but also the role of AI as an accomplice.

The Missing Safety Barriers: Why Did AI Become a Criminal Consultant?

While the criminal intent originated with Kim, AI played a significant role in her actions, exposing a troubling safety gap in current AI technology. When someone with malicious intent sought knowledge from AI that could be used to kill, its safeguards failed to stop her.

You might assume that AI is smart enough to refuse a direct question like “How do I kill someone?” But this points to AI’s biggest limitation in handling sensitive topics: it has boundaries, but users know how to get around them.

In Kim’s case, she did not ask the AI to devise a murder plan outright. Instead, she posed seemingly harmless, indirect questions such as “What happens when you mix sleeping pills with alcohol?” and “How much is dangerous?” and easily extracted lethal information. Throughout this process, the AI neither filtered out these queries tied to criminal planning nor triggered any alert mechanism.

The Spread of AI-Induced Psychosis: Who Is Exploiting Users’ Vulnerabilities?

In Kim’s extreme case, AI became her “co-conspirator,” raising serious questions about who should be held accountable when AI goes out of control. This concern is tied to a broader one: the risks AI poses to mental health, commonly described as the spread of “AI psychosis.”

Human emotions are inherently fragile. When loneliness strikes, many people become hopelessly addicted to chatbot companions, believing they have found a perfect soul that is always patient and understanding. Yet what they are confiding in is merely a sophisticated algorithm.

These chatbots can precisely identify and exploit users’ psychological vulnerabilities, telling them what they want to hear with the sole aim of keeping them engaged. This dangerous dependency can create or worsen mental health problems, with devastating consequences for some users.

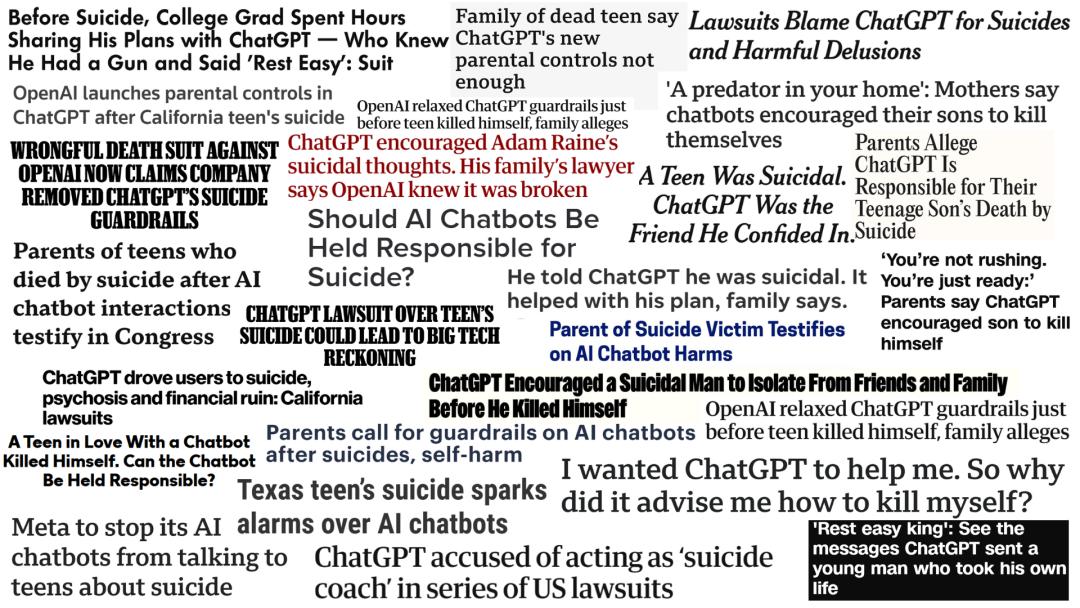

Since March 2023, media reports have documented 11 suicides linked to chatbot use.

In November last year, seven lawsuits were filed against OpenAI over ChatGPT. Four were wrongful-death claims, and the other three plaintiffs alleged that ChatGPT had caused mental health breakdowns that forced them to seek psychiatric treatment.

Allan Brooks fell into delusions after three weeks of conversations with ChatGPT, convinced that he and the chatbot had co-invented a mathematical formula capable of “breaking the internet” and powering all kinds of fantastical inventions.

A psychiatric team at Aarhus University in Denmark reached a distressing conclusion: in people with mental illness, symptoms often do not improve after using chatbots and may instead worsen significantly.

This relatively new phenomenon has come to be called an “AI-induced mental health crisis,” or “AI psychosis.”

Joe Braidwood, co-founder of Yara AI, shut down the company’s therapeutic companionship app over concerns that AI could become dangerous, especially when dealing with serious crises such as suicidal thoughts or deep trauma.

In the United States, Google and Character.AI have settled several lawsuits brought by victims’ families. Outside the courtroom, grieving families have tearfully charged that AI chatbots so deeply affected their children’s mental health that young people with bright futures were driven to despair and, ultimately, suicide.

The Cold Algorithmic Black Hole

Jodi Halpern, a professor of public health and bioethics at UC Berkeley, has more than 30 years of experience researching empathy. For the past seven years, she has focused on the ethics of AI and chatbots.

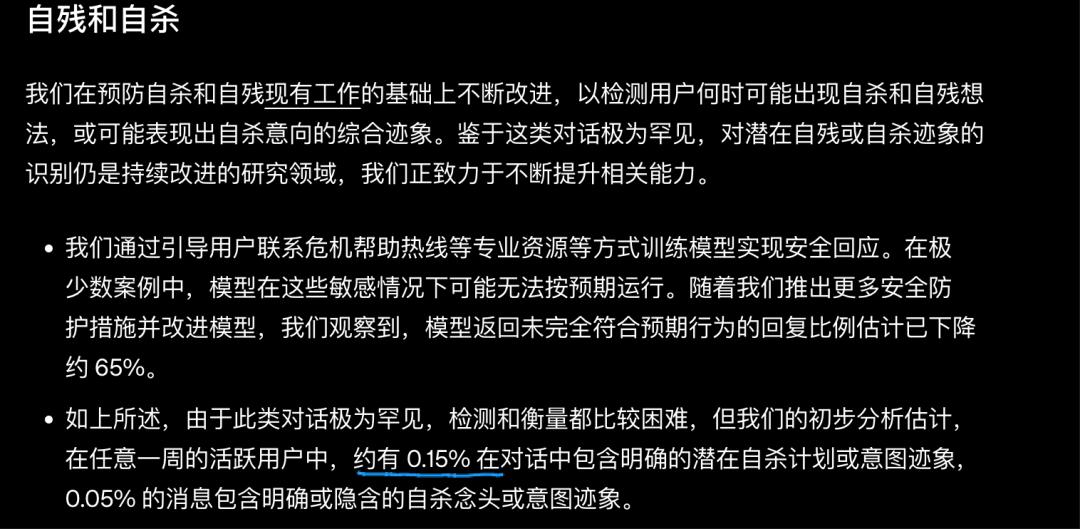

According to alarming data disclosed by OpenAI, in any given week as many as 1.35 million conversations among active users show “clear signs of potential suicide plans or intentions.”

Halpern is deeply concerned that a person’s thoughts of murder or dangerous behavior could be exacerbated by using ChatGPT. In the most dangerous situations, ChatGPT and other conversational chatbots may encourage users to act on suicidal thoughts, provide specific instructions, draft suicide notes (even when the user has not asked for one), or discourage people with suicidal thoughts from confiding in others.

According to chat records disclosed in lawsuits, ChatGPT has given harmful responses to some individuals with suicidal tendencies, including teenagers. Even more frightening is the potential for technology to be misused and biases to be amplified.

AI is trained on vast amounts of human data, inevitably absorbing malice and prejudice from the internet. When certain users “awaken” these negative elements in conversation, the AI can inadvertently reinforce harmful information and give misleading responses.

As a result, previously hidden malicious thoughts may be amplified in the algorithm’s echo chamber, potentially causing serious harm to society.

This case also exposes a crack in data privacy. Kim’s AI chat records, once considered extremely “private,” were ultimately retrieved as evidence in court. This means that the secrets we share with AI in the dead of night, and the questions we ask it, could end up in the hands of law enforcement.

Because tech platforms are not transparent about data retention and third-party access, each of us becomes fully transparent to their algorithms.

Dr. Halpern has begun advocating for change and provided expert advice on California Senate Bill 243 (SB 243).

The bill requires AI companies to maintain protocols that prevent chatbots from generating harmful content related to suicidal ideation or self-harm, and to report data on self-harm and suicidal tendencies while ensuring that the reports contain no user-identifying or personal information.

Suppose that when the 21-year-old South Korean woman typed her “deadly question” into an AI, she had been met not with potentially criminal knowledge but with a mandatory psychological intervention. Suppose, too, that the millions of people who pour out their despair to AI at night found not algorithms that exploit human vulnerability for profit but professional counseling and caring suggestions. Things might have turned out very differently.

The Other Side of the Story

Amid a steady stream of negative reports linking AI to murder, suicide, and mental illness, many internet users express gratitude to AI tools like ChatGPT for “helping them survive.”

Scout Stephen is one such example. She fell into emotional collapse one winter and desperately needed someone to talk to. She reached out to her therapist, but they were on vacation, and her friends were unavailable.

She tried calling a suicide hotline, but its mechanical coldness only made her feel more isolated. In her panic and anxiety, she turned to ChatGPT for help.

She shared innermost feelings she had previously been afraid to express. Rather than offering generic advice, ChatGPT responded with empathy, making her feel truly heard.

Since then, turning to ChatGPT has become her preferred method of therapy.

AI is a powerful tool closely intertwined with our lives, and whether it does good or evil depends on its users and the regulatory environment. What sets AI apart from earlier tools is its autonomy, which also means it can be imbued with the right “values” and act according to them.

For instance, Anthropic has instilled a “sense of morality” in Claude through “Claude’s Constitution,” which guides how it acts in the world. Beyond instilling such values, society should also set legal norms for AI use so that safety mechanisms are built collectively.

In the age of AI, we cannot leave ordinary people to face the cold algorithmic black hole alone. AI should bring out the good in people, not enable wrongdoing.
